DeepLobe | June 24, 2021 | Computer Vision, Deep Learning

Image classification based on deep convolutional neural networks (CNNs) plays an essential role in tasks ranging from disease diagnosis to predicting consumer behavior. Using a deep CNN reduces the time and effort spent on manually extracting and selecting classification features. In recent times, deep CNNs have been applied to image classification with large datasets, improving accuracy by learning richer descriptions of the latent concepts used for pattern recognition. This article introduces a deep learning framework for performing image classification using CNNs and explains the importance of image classification.

Deep CNN (Convolutional Neural Networks) – an Overview

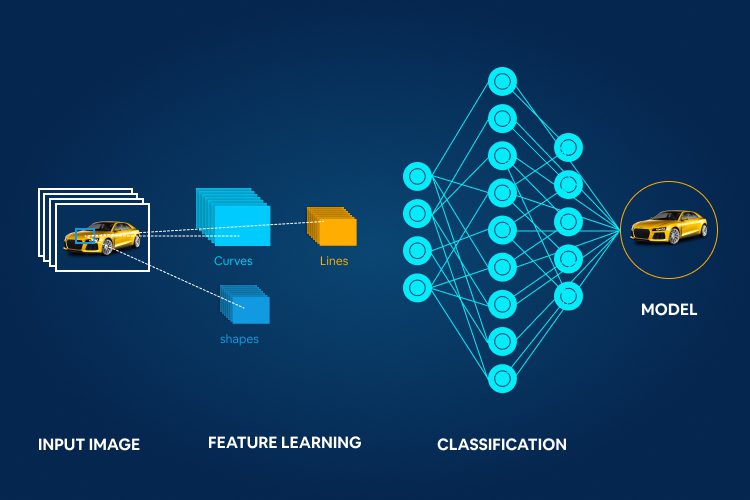

Convolutional neural networks are a kind of artificial neural network widely used in image recognition and processing tasks, and they are designed specifically to process pixel data. They are loosely inspired by the way neurons operate in the human brain. Generally, a CNN is described as having two stages: a feature extraction stage and a stage that uses the extracted local features. In the feature extraction stage, the input of each neuron is connected to a local receptive field of the previous layer. The network then uses the extracted local features to predict and confirm the output.

The advantage of a CNN over other neural network approaches is that it automatically detects the important features without human intervention or supervision. As such, training and applying an image classification model with a deep CNN is easier than ever. For instance, if a CNN-based image classification model is given many images of goats and deer, it can learn the key features of each class by itself.

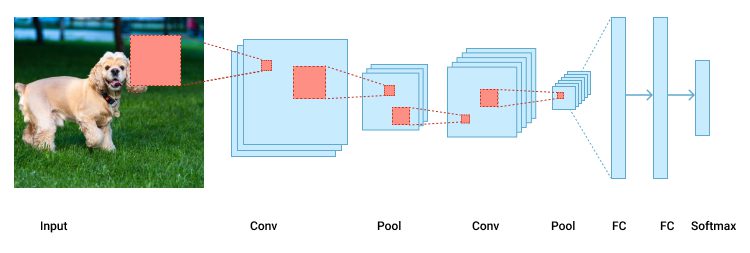

All CNN models follow a similar architecture, illustrated in the figure above. As discussed earlier, every convolutional neural network passes information from one layer to the next. Given an input image, we perform a series of convolution and pooling operations, followed by a sequence of fully connected layers that produce the output (typically through a softmax function).

The main building block of any CNN is the convolutional layer. Convolution is a mathematical operation that merges two sets of information: here, the input image and a convolutional filter. Sliding the filter over the input produces a feature map. The stride specifies how far the filter moves at each step, and padding can be added around the input so that the output keeps the same dimensions. The same procedure is repeated across all convolutional operations to obtain multiple feature maps from distinct filters. In practice, we perform multiple convolutions on an input, each with a different filter, and each one results in a distinct feature map.
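The sliding-filter procedure can be sketched in plain Python. This is a minimal illustration rather than a production implementation: the `conv2d` function, the sample `image`, and the `vertical_edge` filter are hypothetical names chosen for this example, and, as in most deep learning libraries, the filter is applied without flipping (strictly speaking, a cross-correlation).

```python
def conv2d(image, kernel, stride=1, padding=0):
    """Slide `kernel` over `image` (lists of lists) and return the feature map."""
    h, w = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])

    # Zero-pad the image on all four sides.
    padded = [[0.0] * (w + 2 * padding) for _ in range(h + 2 * padding)]
    for r in range(h):
        for c in range(w):
            padded[r + padding][c + padding] = image[r][c]

    # Output size: floor((input + 2 * padding - kernel) / stride) + 1
    out_h = (h + 2 * padding - kh) // stride + 1
    out_w = (w + 2 * padding - kw) // stride + 1

    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            top, left = i * stride, j * stride
            total = 0.0
            # Multiply the window by the filter element-wise and sum.
            for a in range(kh):
                for b in range(kw):
                    total += padded[top + a][left + b] * kernel[a][b]
            row.append(total)
        feature_map.append(row)
    return feature_map


# Hypothetical 4x4 single-channel image and a 3x3 vertical-edge filter.
image = [[1, 2, 3, 0],
         [4, 5, 6, 1],
         [7, 8, 9, 2],
         [1, 0, 2, 3]]
vertical_edge = [[1, 0, -1],
                 [1, 0, -1],
                 [1, 0, -1]]

same = conv2d(image, vertical_edge, stride=1, padding=1)   # output stays 4x4
valid = conv2d(image, vertical_edge, stride=1, padding=0)  # output shrinks to 2x2
```

With a 3x3 filter and a stride of 1, a padding of 1 keeps the 4x4 input size, while no padding shrinks the feature map to 2x2.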

For instance, if we used ten distinct filters on an image, we would have ten feature maps, each representing the response of one filter. To realize the full potential of a CNN, we apply the ReLU activation function to the feature maps, which introduces non-linearity and typically improves accuracy.
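As a rough sketch of this step, ReLU simply replaces every negative value in a feature map with zero. The `relu` helper and the two sample feature maps below are made up for illustration, and the filter names are placeholders.

```python
def relu(feature_map):
    """Replace negative activations with zero and keep positive ones unchanged."""
    return [[max(0.0, value) for value in row] for row in feature_map]


# One hypothetical feature map per filter (values chosen for illustration only).
feature_maps = {
    "vertical_edge": [[-6.0, 12.0], [-5.0, 7.0]],
    "horizontal_edge": [[3.0, -1.5], [0.0, -8.0]],
}

# Apply ReLU to every feature map independently.
activated = {name: relu(fm) for name, fm in feature_maps.items()}
```

The number of feature maps is unchanged: one filter in, one activated feature map out.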

Pooling is usually performed to reduce dimensionality. By pooling after a convolutional operation, we shrink the feature maps and therefore the number of parameters needed in later layers, which shortens training time and helps combat overfitting. Pooling reduces the height and width of every feature map while keeping the depth intact. The most common type is max pooling, which keeps the largest value in each window. As with convolution, we define a window size and a stride. The hyperparameters considered for a convolutional operation are filter size, filter count, stride, and padding.
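Max pooling can be sketched in a few lines. The `max_pool2d` function and the sample feature map are assumptions made for this example; a 2x2 window with a stride of 2 is a common choice but not the only one.

```python
def max_pool2d(feature_map, size=2, stride=2):
    """Keep the largest value in each `size` x `size` window of the feature map."""
    h, w = len(feature_map), len(feature_map[0])
    out_h = (h - size) // stride + 1
    out_w = (w - size) // stride + 1

    pooled = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Collect the values covered by the current window.
            window = [feature_map[i * stride + a][j * stride + b]
                      for a in range(size) for b in range(size)]
            row.append(max(window))
        pooled.append(row)
    return pooled


# Hypothetical 4x4 feature map.
feature_map = [[1, 3, 2, 4],
               [5, 6, 1, 0],
               [7, 2, 9, 8],
               [0, 1, 3, 4]]

pooled = max_pool2d(feature_map)  # height and width are halved: 4x4 -> 2x2
```

Each feature map is pooled separately, which is why the depth (the number of feature maps) stays the same.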

After the convolution and pooling layers, we add a couple of fully connected layers (a feed-forward neural network). In a fully connected layer, every input from the previous layer is connected to every activation unit of the next layer, and these layers combine the extracted features to form the final output. Usually, a CNN uses two fully connected layers followed by an output layer.
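The final stage can be sketched as a single fully connected layer followed by softmax. Everything here is illustrative: the `dense` and `softmax` helpers, the weights, and the biases are invented for the example (a trained network would learn them), and the two classes reuse the goat and deer example from earlier in the article.

```python
import math


def dense(inputs, weights, biases):
    """Fully connected layer: every input contributes to every output unit."""
    return [sum(w * x for w, x in zip(unit_weights, inputs)) + b
            for unit_weights, b in zip(weights, biases)]


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    largest = max(logits)
    exps = [math.exp(z - largest) for z in logits]  # subtract max for stability
    total = sum(exps)
    return [e / total for e in exps]


classes = ["goat", "deer"]

# Pooled feature map flattened into a vector (hypothetical values).
flattened = [6.0, 4.0, 7.0, 9.0]

# One row of weights per output class (made-up numbers, not trained values).
weights = [[0.1, -0.2, 0.05, 0.3],
           [-0.3, 0.4, 0.1, -0.1]]
biases = [0.0, 0.5]

logits = dense(flattened, weights, biases)
probabilities = softmax(logits)
predicted = classes[max(range(len(classes)), key=probabilities.__getitem__)]
```

The class with the highest probability is taken as the prediction. A real CNN would stack two or more such layers, with an activation function between them, before the softmax output.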

Importance of Image Classification in Computer Vision Applications

The objective of any image classification architecture is to identify the features occurring in an image and assign them to an object class for further analysis. Image classification is the most prominent part of any digital image analysis application. From image recognition to image analytics, image classification is the primary tool that allows a machine vision model to perform at scale. Below are a few computer vision applications in which image classification plays an important role.

1. Image Analytics

As organizations across the globe have started to realize the possibilities of unstructured data and how to extract value from it, interest in image analytics has grown sharply. With images and videos reportedly accounting for around 80% of unstructured data, extracting information from these sources opens up a wide range of opportunities. The potential of image analytics is being leveraged across industries such as retail, entertainment, logistics, and insurance. Below are a few use cases.

Airport security and surveillance

Image analytics is not only helping airport security evolve but also helping airports meet today’s demands: deploying security solutions, preventing theft, running video management systems that monitor airport infrastructure and alert passengers and staff, analyzing passenger behavior, counting people, and more.

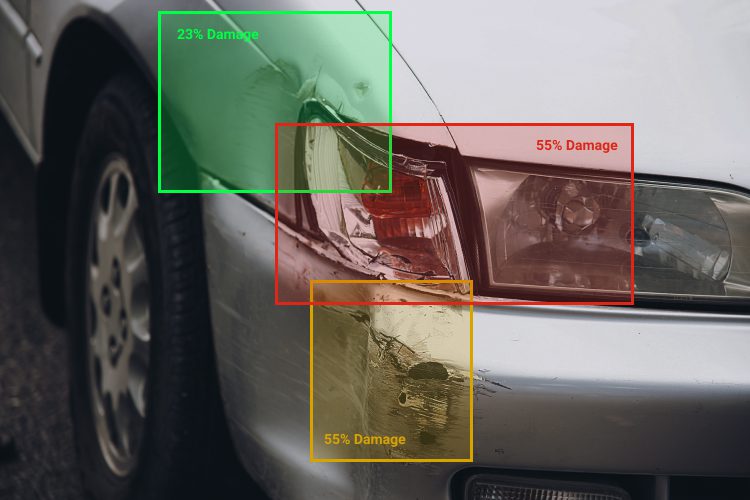

Real-time vehicle damage assessment

Using images taken at the scene of an accident, we can perform automated vehicle damage detection, which can greatly help insurance and finance companies reduce the cost of processing insurance claims.

Smart inventory and logistics supervision

Like other industries, the logistics and fleet management business is leveraging computer vision and data analytics to track, trace, and monitor inventory in real time and to mitigate the challenges associated with logistics and fleet management.

2. Image Recognition

Image classification is often referred to as image recognition. There are countless examples of how image recognition algorithms allow machines to interpret and categorize what they “discover” within images or videos. Examples of how image recognition enriches user experiences include, but are not limited to, user-generated content moderation, enhanced visual search, automated photo and video tagging, and interactive marketing or creative campaigns.

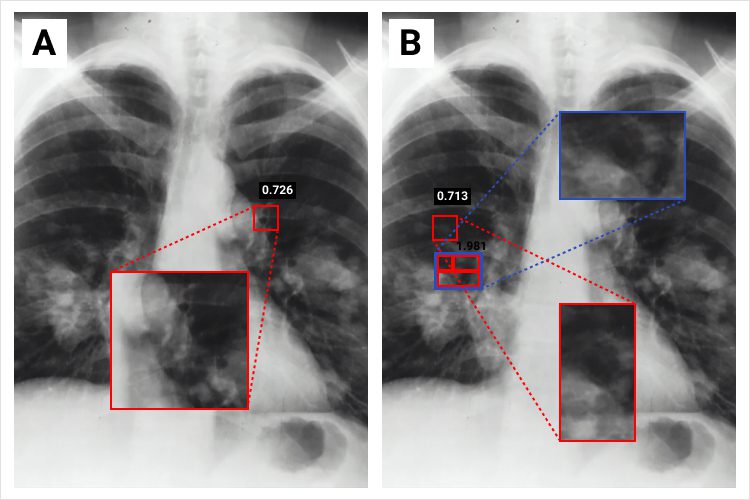

The use of image recognition in medical image processing is on the rise. By identifying patterns in patient diagnostic reports, image recognition supports clinical decision-making and is modernizing healthcare operations. It is helping healthcare professionals identify and diagnose challenging diseases, such as melanoma, other types of cancer, and heart disease, at an early stage for better treatment and recovery.

Image recognition technology is also boosting the performance of applications used in automated vehicles and driving the future of the automotive industry. Computer vision is one of the vital technologies behind the industry's self-driving ambitions. It also enhances safety by identifying objects, signs, sharp turns, people, and moving objects on the road for a better driving experience.

The application of image recognition is not limited to any one form. For instance, its use to improve augmented reality in gaming is progressing rapidly. The gaming sector is growing quickly, and one of the main reasons for that growth is its adoption of image recognition technology. Pokémon Go is one example of how this technology is leveraged at scale.

3. Face Recognition

As mentioned above, image recognition is used in almost every industry in one form or another in today’s digital world. Face recognition is one of its most promising applications. It is a biometric technology that goes beyond detecting a human face in a digital image to identifying whose face it is. Below are two widely used face recognition use cases.

Biometric identification

Face recognition is widely used for biometric authentication. It is already used to unlock phones, for surveillance in banks, retail stores, stadiums, and airports, and in other specialized applications. A more recent advancement in biometric authentication is iris recognition, one of the most forward-looking image recognition technologies for reducing crime and preventing fraud.

Consumer behavior analysis

Consumer behavior analysis allows businesses to study and understand how people make buying decisions regarding products, services, or organizations. By employing face recognition technology, we can take this research to the next level by analyzing consumer emotions and making the decisions needed to turn negative reactions into positive ones. In this way, marketing campaigns can be improved to deliver better monetary value.

VGG-16, a deep CNN for large-scale image recognition, is one of the most popular pre-trained models for image classification because of its high accuracy. Working with deep CNN architectures is now one of the classical approaches. With time and research, image classification and its sister technologies, such as object recognition and face recognition, have gained remarkable importance for extracting features from images.

If you want to bring image classification or image recognition technology into your business to improve both business and consumer-centric outcomes, DeepLobe is your “go-to” place. To learn more about DeepLobe or about computer vision technologies, consult with our experts.